Study notes on OpenAI's Sora


Video generation models as world simulators

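We explore large-scale training of generative models on video data. Specifically, we jointly train text-conditional diffusion models on videos and images of variable durations, resolutions and aspect ratios. We use a transformer architecture that operates on spacetime patches of video and image latent codes. Our largest model, Sora, can generate a minute of high-fidelity video. Our results suggest that scaling video generation models is a promising path towards building general-purpose simulators of the physical world.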

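This technical report focuses on two things: (1) our method for turning visual data of all types into a unified representation that enables large-scale training of generative models, and (2) a qualitative evaluation of Sora's capabilities and limitations. Model and implementation details are not included in the report.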

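Much prior work has studied generative modeling of video data with a variety of methods, including recurrent networks, generative adversarial networks, autoregressive transformers, and diffusion models. These works typically focus on a narrow category of visual data, on shorter videos, or on videos of a fixed size. Sora is a generalist model of visual data: it can generate videos and images spanning diverse durations, aspect ratios and resolutions, up to a full minute of high-definition video.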

Turning visual data into patches

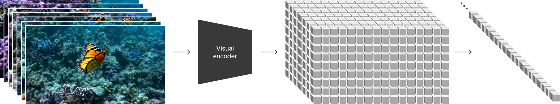

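We take inspiration from large language models, which acquire generalist capabilities by training on internet-scale data. The success of language models is enabled in part by tokens that elegantly unify text, code, math and various natural languages. In this work, we consider how generative models of visual data can inherit these benefits. Whereas language models have text tokens, Sora has visual patches. Patches have previously been shown to be an effective representation for models of visual data. We find that patches are a highly scalable and effective representation for training generative models on diverse types of videos and images.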

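At a high level, we turn videos into patches in two steps: we first compress the video into a lower-dimensional latent space, and then decompose that representation into spacetime patches.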

Video compression network

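We train a network that reduces the dimensionality of visual data. This network takes raw video as input and outputs a latent representation that is compressed both temporally and spatially. Sora is trained on, and subsequently generates videos within, this compressed latent space. We also train a corresponding decoder model that maps generated latents back to pixel space.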

Spacetime Latent Patches


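Given a compressed input video, we extract a sequence of spacetime patches that act as transformer tokens. This scheme works for images too, since an image is just a video with a single frame. Our patch-based representation lets Sora train on videos and images of variable resolutions, durations and aspect ratios. At inference time, we can control the size of generated videos by arranging randomly initialized patches in an appropriately sized grid.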
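To make the patch idea concrete, here is a minimal sketch of cutting a compressed latent video into spacetime patches. The report does not disclose Sora's latent shape or patch size, so the helper name `spacetime_patches`, the nested-list latent layout `[t][y][x]`, and the patch sizes used below are all illustrative assumptions.

```python
def spacetime_patches(latent, pt, ph, pw):
    """Split a latent video (nested lists indexed [t][y][x]) into flat patches.

    Hypothetical helper: Sora's real latent shape and patch size are not public.
    Each patch covers pt frames x ph rows x pw columns and is flattened into
    one token; patches are returned in (time, row, column) raster order.
    """
    T, H, W = len(latent), len(latent[0]), len(latent[0][0])
    if T % pt or H % ph or W % pw:
        raise ValueError("latent dimensions must be divisible by the patch size")
    tokens = []
    for t0 in range(0, T, pt):
        for y0 in range(0, H, ph):
            for x0 in range(0, W, pw):
                tokens.append([
                    latent[t][y][x]
                    for t in range(t0, t0 + pt)
                    for y in range(y0, y0 + ph)
                    for x in range(x0, x0 + pw)
                ])
    return tokens


# A toy 4-frame, 4x6 latent whose entries record their own coordinates.
video = [[[(t, y, x) for x in range(6)] for y in range(4)] for t in range(4)]
video_tokens = spacetime_patches(video, pt=2, ph=2, pw=2)

# An image is treated as a one-frame video, so the same function applies.
image = [[[(0, y, x) for x in range(6)] for y in range(4)]]
image_tokens = spacetime_patches(image, pt=1, ph=2, pw=2)
```

Because the token sequence length simply follows from the input's size, nothing in this scheme requires a fixed duration, resolution or aspect ratio.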

Scaling transformers for video generation

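Sora is a diffusion model: given noisy input patches (and conditioning information such as text prompts), it is trained to predict the original "clean" patches. Importantly, Sora is a diffusion transformer. Transformers have demonstrated remarkable scaling properties across a variety of domains, including language modeling, computer vision, and image generation.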
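The training setup described here (noisy patches in, clean patches out) can be sketched as follows. The report does not give the noise schedule or the loss, so this sketch assumes a standard variance-preserving corruption and a mean squared error; the names `add_noise` and `clean_prediction_loss` are hypothetical.

```python
import math
import random


def add_noise(clean_patch, alpha_bar, rng):
    """Corrupt one flattened patch: x_t = sqrt(a) * x_0 + sqrt(1 - a) * eps.

    Assumes a variance-preserving schedule, where alpha_bar in [0, 1] is the
    fraction of signal kept. The schedule is an assumption, not from the report.
    """
    signal, noise = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [signal * x + noise * rng.gauss(0.0, 1.0) for x in clean_patch]


def clean_prediction_loss(predicted_patch, clean_patch):
    """Mean squared error between a model's prediction and the clean patch."""
    return sum((p - c) ** 2 for p, c in zip(predicted_patch, clean_patch)) / len(clean_patch)


rng = random.Random(0)
clean = [0.5, -1.0, 2.0, 0.0]
noisy = add_noise(clean, alpha_bar=0.25, rng=rng)

# A real model would map (noisy patch, noise level, text conditioning) to a
# prediction. Two stand-in predictions are scored here: a perfect one, and
# the noisy input passed through unchanged.
perfect_loss = clean_prediction_loss(clean, clean)
identity_loss = clean_prediction_loss(noisy, clean)
```

Training would lower this loss by adjusting the transformer's weights so that its prediction moves from the noisy input towards the clean patch.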

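In this work, we find that diffusion transformers also scale effectively as video models. Below, we compare video samples generated with fixed seeds and inputs as training progresses. Sample quality improves markedly as training compute increases.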

Variable durations, resolutions, aspect ratios

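Past approaches to image and video generation typically resize, crop or trim videos to a standard size, for example 4-second videos at 256x256 resolution. We find that training on data at its native size instead provides several benefits.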

Sampling flexibility


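Sora can sample widescreen 1920x1080 videos, vertical 1080x1920 videos, and everything in between. This lets Sora create content for different devices directly at their native aspect ratios. It also lets us quickly prototype content at lower sizes before generating at full resolution, all with the same model.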
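One way to see why a single model can serve all of these sizes is to compute the patch grid each request would need. This is only a sketch: `spatial_stride` and `temporal_stride` (the pixels and frames covered by one latent patch) are hypothetical values, since the real compression factors and patch size are not published.

```python
import math


def patch_grid(width, height, frames, spatial_stride=16, temporal_stride=4):
    """Return the (time, rows, cols) patch grid needed for a requested video.

    spatial_stride and temporal_stride are hypothetical: the number of pixels
    and frames that one latent patch covers after compression.
    """
    return (
        math.ceil(frames / temporal_stride),
        math.ceil(height / spatial_stride),
        math.ceil(width / spatial_stride),
    )


def token_count(grid):
    """Number of transformer tokens in a (time, rows, cols) grid."""
    t, rows, cols = grid
    return t * rows * cols


widescreen = patch_grid(1920, 1080, frames=120)  # landscape
vertical = patch_grid(1080, 1920, frames=120)    # portrait
preview = patch_grid(480, 270, frames=120)       # quarter-size draft, same aspect ratio
```

Under these assumed strides the quarter-size draft needs 16 times fewer tokens than the full-resolution request, which is what makes low-resolution prototyping cheap.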

Improved framing and composition

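We find empirically that training on videos at their native aspect ratios improves composition and framing. We compare Sora against a version of the model trained with all videos cropped to a square, which is common practice when training generative models. The model trained on square crops (left) sometimes generates videos where the subject is only partially in view. In comparison, videos from Sora (right) have improved framing.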

Language understanding


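Training text-to-video generation systems requires a large number of videos with corresponding text captions. We apply the re-captioning technique introduced in DALL·E 3 to videos. We first train a highly descriptive captioner model and then use it to produce text captions for all videos in our training set. We find that training on highly descriptive video captions improves text fidelity as well as the overall quality of the videos.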

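As with DALL·E 3, we also use GPT to turn short user prompts into longer, more detailed captions that are sent to the video model. This enables Sora to generate high-quality videos that accurately follow user prompts.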

Prompting with images and videos

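All of the results above and on our landing page are text-to-video samples. But Sora can also be prompted with other inputs, such as pre-existing images or video. This capability enables Sora to perform a wide range of image and video editing tasks, such as creating perfectly looping video, animating static images, and extending videos forwards or backwards in time.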

Animating DALL·E images


Sora can generate videos given an image and a prompt as input. Below we show example videos generated from DALL·E 2 and DALL·E 3 images.

Extending generated videos

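Sora can also extend videos, either forward or backward in time. Below are four videos that were all extended backward in time, starting from a segment of a generated video. As a result, each of the four videos starts differently, yet all four lead to the same ending.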

We can use this method to extend a video both forward and backward to produce a seamless infinite loop.

Video-to-video editing


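Diffusion models have enabled a plethora of methods for editing images and videos from text prompts. Below we apply one of these methods, SDEdit, to Sora. This technique enables Sora to transform the styles and environments of input videos zero-shot.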
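The core idea of SDEdit is to add noise to the input up to an intermediate level and then denoise from there under the new prompt, so coarse structure survives while details are regenerated. The sketch below illustrates only that control flow. How it is applied inside Sora is not described in the report; the linear signal schedule, the `strength` parameter, and the `denoise_step` callable (a stand-in for one reverse-diffusion step of a text-conditioned model) are assumptions.

```python
import math
import random


def sdedit(source, denoise_step, strength, num_steps, rng):
    """SDEdit-style editing sketch on a flat list of latent values.

    strength in [0, 1]: 0 returns the source unchanged, 1 starts from pure
    noise. denoise_step(x, step) is a hypothetical stand-in for one reverse
    step of a diffusion model conditioned on the editing prompt.
    """
    start = int(round(strength * num_steps))  # how far into the schedule to jump
    if start == 0:
        return list(source)
    alpha_bar = 1.0 - start / num_steps       # assumed linear signal schedule
    x = [
        math.sqrt(alpha_bar) * s + math.sqrt(1.0 - alpha_bar) * rng.gauss(0.0, 1.0)
        for s in source
    ]
    for step in range(start, 0, -1):          # denoise from the intermediate level to 0
        x = denoise_step(x, step)
    return x


steps_seen = []


def toy_denoise_step(x, step):
    """Toy stand-in: pull every value halfway towards a 'target style' of 1.0."""
    steps_seen.append(step)
    return [0.5 * v + 0.5 for v in x]


edited = sdedit([0.0, 2.0], toy_denoise_step, strength=0.6, num_steps=10, rng=random.Random(0))
```

A lower `strength` keeps the result closer to the source video, while a higher one gives the prompt more freedom to change it.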

Connecting videos


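We can also use Sora to gradually interpolate between two input videos, creating seamless transitions between videos with entirely different subjects and scene compositions. In the examples below, the video in the center interpolates between the corresponding videos on the left and right.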

Image generation capabilities

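Sora can also generate images. We do this by arranging patches of Gaussian noise in a spatial grid with a temporal extent of one frame. The model can generate images of variable sizes, up to 2048x2048 resolution.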
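As a minimal sketch of that starting point, the helper below builds a grid of Gaussian-noise patches; setting the temporal extent to one frame gives the image case. The name `noise_patch_grid`, the grid sizes, and `patch_dim` (values per patch) are hypothetical, and the denoising model that would turn this noise into an image is not shown.

```python
import random


def noise_patch_grid(frames, rows, cols, patch_dim, seed=None):
    """Build a frames x rows x cols grid of Gaussian-noise patches.

    Each patch is a list of patch_dim standard-normal samples. All sizes are
    illustrative; the real patch dimensions are not published.
    """
    rng = random.Random(seed)
    return [
        [[[rng.gauss(0.0, 1.0) for _ in range(patch_dim)] for _ in range(cols)]
         for _ in range(rows)]
        for _ in range(frames)
    ]


# An image is a grid with a temporal extent of one frame.
image_grid = noise_patch_grid(frames=1, rows=4, cols=4, patch_dim=8, seed=0)

# A video uses the same construction with more frames.
video_grid = noise_patch_grid(frames=3, rows=4, cols=4, patch_dim=8, seed=0)
```

Changing `rows` and `cols` changes the size and aspect ratio of the output, and fixing `seed` makes the starting noise reproducible.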

Close-up portrait shot of a woman in autumn, extreme detail, shallow depth of field


Vibrant coral reef teeming with colorful fish and sea creatures


Digital art of a young tiger under an apple tree in a matte painting style with gorgeous details


A snowy mountain village with cozy cabins and a northern lights display, high detail and photorealistic dslr, 50mm f/1.2


Emerging simulation capabilities

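We find that video models exhibit a number of interesting emergent capabilities when trained at scale. These capabilities enable Sora to simulate some aspects of people, animals and environments from the physical world. These properties emerge without any explicit inductive biases for 3D, objects, and so on; they are purely phenomena of scale.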

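Sora can generate videos with dynamic camera motion. As the camera shifts and rotates, people and scene elements move consistently through three-dimensional space.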

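A significant challenge for video generation systems has been maintaining temporal consistency when sampling long videos. We find that Sora is often, though not always, able to model both short-range and long-range dependencies effectively. For example, our model can persist people, animals and objects even when they are occluded or leave the frame. Likewise, it can generate multiple shots of the same character in a single sample and maintain their appearance throughout the video.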

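Sora can sometimes simulate actions that affect the state of the world in simple ways. For example, a painter can leave new strokes on a canvas that persist over time, or a man can eat a burger and leave bite marks.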

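Sora can also simulate artificial processes; one example is video games. Sora can control the player in Minecraft with a basic policy while simultaneously rendering the world and its dynamics in high fidelity. These capabilities can be elicited zero-shot, with no additional training, by prompting Sora with captions that mention "Minecraft".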

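These capabilities suggest that continued scaling of video models is a promising path towards highly capable simulators of the physical and digital world, and of the objects, animals and people that live within them.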

Discussion

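Sora currently exhibits numerous limitations as a simulator. For example, it does not accurately model the physics of many basic interactions, such as glass shattering. Other interactions, such as eating food, do not always yield correct changes in object state. We list other common failure modes of the model on our landing page, such as incoherencies that develop in long-duration samples and the spontaneous appearance of objects.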

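We believe the capabilities Sora has today demonstrate that continued scaling of video models is a promising path towards capable simulators of the physical and digital world, and of the objects, animals and people that live within them.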